How to Implement DevOps: A Step-by-Step Guide for Engineering Teams (2026)

DevOps implementation starts with an honest audit of where your deployments break down, then builds CI/CD, IaC, and observability incrementally — not all at once. Most teams see measurable results within 60 days of starting.

DevOps implementation is one of those things that sounds straightforward on paper and turns out to be genuinely hard in practice. Not because the tools are complicated — most of them are excellent — but because the real work is changing how your team thinks about ownership, feedback loops, and failure.

This guide is the one we wish existed when we started running DevOps engagements. It's opinionated, practical, and skips the philosophy lectures. By the end, you'll know exactly what to do in your first 30 days.

What DevOps Implementation Actually Means

"DevOps" has been stretched to cover everything from a single Jenkins server to a platform engineering department with 40 people. For this guide, we define DevOps implementation as:

Building the systems that allow your engineering team to reliably ship code to production multiple times per week, with automated testing, observable infrastructure, and a fast path from failure to recovery.

That's it. If you can ship multiple times per week with confidence, you've implemented DevOps. Everything else is refinement.

Step 1: Audit Your Current SDLC (Week 1)

Before touching a single tool, map your current deployment process. Specifically, answer these questions:

- How long does it take from a merged PR to code running in production? (Hours, days, or weeks?)

- How many manual steps does a deployment require? (SSH into servers? Click buttons in a console?)

- How do you know when something breaks in production? (Monitoring, or a customer complaint?)

- How long does it take to roll back a bad deployment? (Minutes, or another full deployment?)

- How many environments do you have? (Dev, staging, prod — or just prod?)

Write the answers down. The gaps between "what should happen" and "what actually happens" are your implementation backlog. At Ortem, our discovery call for new DevOps engagements covers exactly these five questions. The answers shape the entire implementation plan.
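The first question becomes more useful once it is a number you can track from week to week. Below is a minimal Python sketch that computes the median time from merge to production. The AUDIT_SAMPLE timestamps are hypothetical. In a real audit they would come from your Git host's merge times and your deployment logs.

```python
from datetime import datetime
from statistics import median

# Hypothetical audit sample: (PR merged at, same change live in production at).
AUDIT_SAMPLE = [
    ("2026-01-05T10:00", "2026-01-07T16:00"),
    ("2026-01-06T09:30", "2026-01-06T15:30"),
    ("2026-01-08T14:00", "2026-01-12T11:00"),
]


def lead_time_hours(merged_at: str, deployed_at: str) -> float:
    """Hours between a PR merge and that change running in production."""
    delta = datetime.fromisoformat(deployed_at) - datetime.fromisoformat(merged_at)
    return delta.total_seconds() / 3600


def median_lead_time_hours(samples: list[tuple[str, str]]) -> float:
    """Median merge-to-production lead time across the audited changes."""
    return median(lead_time_hours(merged, deployed) for merged, deployed in samples)


print(median_lead_time_hours(AUDIT_SAMPLE))  # 54.0
```

The median works better than the mean here because a single change that sat for a week would distort the mean.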

Step 2: Pick Your Implementation Path (Week 1)

There are three common starting points depending on your current state:

| Starting state | First 30-day focus |
|---|---|
| No CI/CD at all | Build a CI/CD pipeline for your most-deployed service |
| CI but manual deployments | Add automated deployment to staging, then to production |
| CI/CD exists but is broken | Fix the most common failure mode before adding anything |

The worst mistake is trying to implement everything at once. Pick one service, one pipeline, one environment. Get it working reliably. Then expand.
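You can also encode the table as a small decision function over the Step 1 audit answers. The classify helper and its three boolean inputs are hypothetical. The ordering reflects our reading of the table: a broken pipeline gets fixed before automated deployment is layered on top of it.

```python
from enum import Enum
from typing import Optional


class StartingState(Enum):
    NO_CI_CD = "No CI/CD at all"
    CI_MANUAL_DEPLOYS = "CI but manual deployments"
    CI_CD_BROKEN = "CI/CD exists but is broken"


# First 30-day focus for each starting state, paraphrasing the table.
FIRST_FOCUS = {
    StartingState.NO_CI_CD: "Build a CI/CD pipeline for your most-deployed service",
    StartingState.CI_MANUAL_DEPLOYS: "Add automated deployment to staging, then to production",
    StartingState.CI_CD_BROKEN: "Fix the most common failure mode before adding anything",
}


def classify(has_ci: bool, pipeline_reliable: bool,
             automated_deploys: bool) -> Optional[StartingState]:
    """Map Step 1 audit answers to a starting state (hypothetical helper)."""
    if not has_ci:
        return StartingState.NO_CI_CD
    # A flaky pipeline is fixed before anything new is added on top of it.
    if not pipeline_reliable:
        return StartingState.CI_CD_BROKEN
    if not automated_deploys:
        return StartingState.CI_MANUAL_DEPLOYS
    return None  # none of the three starting states applies


state = classify(has_ci=True, pipeline_reliable=True, automated_deploys=False)
print(FIRST_FOCUS[state])
```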

Step 3: Set Up CI/CD (Weeks 2–3)

A CI/CD pipeline has two jobs: catch problems before they reach production (CI) and deploy automatically when they don't (CD).

Recommended stack

- GitHub Actions (if you're on GitHub) or GitLab CI: both are excellent, and both have free tiers that cover many small teams

- Docker for containerising your application

- Your cloud provider's container service (ECS, App Service, Cloud Run, or EKS) for deployment

A minimal GitHub Actions pipeline

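```yaml
name: CI/CD Pipeline

on:
  push:
    branches: [main]
  pull_request:
    branches: [main]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Install dependencies
        run: npm ci
      - name: Run tests
        run: npm test
      - name: Build
        run: npm run build

  deploy:
    needs: test
    runs-on: ubuntu-latest
    # Deploy only on pushes to main, never from pull requests.
    if: github.event_name == 'push' && github.ref == 'refs/heads/main'
    permissions:
      id-token: write   # allows assuming an AWS IAM role via OIDC
      contents: read
    env:
      AWS_REGION: us-east-1   # assumed region; use your own
      ECR_REPOSITORY: myapp
    steps:
      - uses: actions/checkout@v4
      - uses: aws-actions/configure-aws-credentials@v4
        with:
          role-to-assume: ${{ secrets.AWS_DEPLOY_ROLE_ARN }}   # assumed secret name
          aws-region: ${{ env.AWS_REGION }}
      - name: Log in to ECR
        id: ecr
        uses: aws-actions/amazon-ecr-login@v2
      - name: Build and push Docker image
        id: image
        run: |
          # Tag with the full registry path, otherwise docker push has nothing to push.
          IMAGE="${{ steps.ecr.outputs.registry }}/${ECR_REPOSITORY}:${{ github.sha }}"
          docker build -t "$IMAGE" .
          docker push "$IMAGE"
          echo "uri=$IMAGE" >> "$GITHUB_OUTPUT"
      # --force-new-deployment alone restarts tasks with whatever image the current
      # task definition names, so point the task definition at the new tag instead.
      - name: Render task definition with the new image
        id: taskdef
        uses: aws-actions/amazon-ecs-render-task-definition@v1
        with:
          task-definition: task-definition.json   # assumed path to your task definition JSON
          container-name: app
          image: ${{ steps.image.outputs.uri }}
      - name: Deploy to ECS
        uses: aws-actions/amazon-ecs-deploy-task-definition@v2
        with:
          task-definition: ${{ steps.taskdef.outputs.task-definition }}
          cluster: prod
          service: myapp
          wait-for-service-stability: true
```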

This is deliberately minimal. Add complexity (blue/green, canary, approval gates) only when the simple version is stable and you understand why you need more.

What "good" looks like

- Every PR triggers automated tests

- Tests run in under 10 minutes (if they take longer, engineers start skipping them)

- A merged PR deploys to staging automatically

- A successful staging deployment is promoted to production with one click, or automatically after a soak period
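The soak-period rule in the last bullet can be expressed as a simple promotion gate. This minimal sketch assumes you can read the staging deploy time and the staging 5xx rate from your monitoring. The 30-minute soak and 1% error threshold are placeholder values, not defaults from any tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class StagingDeploy:
    deployed_at: datetime
    error_rate: float  # fraction of 5xx responses in staging since this deploy


def ready_for_production(deploy: StagingDeploy, now: datetime,
                         soak: timedelta = timedelta(minutes=30),
                         max_error_rate: float = 0.01) -> bool:
    """Promote only once the soak period has passed and staging stayed healthy."""
    soaked = now - deploy.deployed_at >= soak
    healthy = deploy.error_rate <= max_error_rate
    return soaked and healthy


candidate = StagingDeploy(deployed_at=datetime(2026, 1, 5, 10, 0), error_rate=0.002)
print(ready_for_production(candidate, now=datetime(2026, 1, 5, 10, 45)))  # True
```

Both conditions must hold. A healthy deploy that has only run for five minutes has not yet seen enough traffic to prove anything.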

Step 4: Infrastructure as Code (Weeks 3–4)

Infrastructure as Code (IaC) means your servers, databases, and networking are defined in code files (Terraform, Pulumi, CloudFormation) rather than configured manually through the console.

Why it matters: When a colleague clicks through the AWS console to set up a new environment, that knowledge lives in their head. When you codify it in Terraform, it lives in your repository — versioned, reviewable, and reproducible.
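Conceptually, an IaC tool compares what the code declares with the resources it manages and proposes the difference. That is roughly what terraform plan shows you. The toy Python model below illustrates only the idea. It is not how Terraform is implemented, and the resource names are hypothetical. It shows why a console edit, such as desired_count changed from 2 to 3 by hand, surfaces as a change the next time the code is applied.

```python
def plan(desired: dict[str, dict], actual: dict[str, dict]) -> dict[str, list[str]]:
    """List the changes needed to make the managed resources match the code.

    `actual` stands for resources the tool already manages, as they exist now.
    """
    return {
        "create": sorted(desired.keys() - actual.keys()),
        "update": sorted(k for k in desired.keys() & actual.keys() if desired[k] != actual[k]),
        "delete": sorted(actual.keys() - desired.keys()),
    }


# Hypothetical example: desired_count was bumped to 3 in the console,
# and the old_logs bucket was removed from the code.
desired = {
    "ecs_service.app": {"desired_count": 2},
    "s3_bucket.assets": {"versioning": True},
}
actual = {
    "ecs_service.app": {"desired_count": 3},
    "s3_bucket.old_logs": {"versioning": False},
}
print(plan(desired, actual))
# {'create': ['s3_bucket.assets'], 'update': ['ecs_service.app'], 'delete': ['s3_bucket.old_logs']}
```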

Starting with Terraform

Install the Terraform CLI and start with a single resource — your ECS service, your RDS database, or your S3 bucket. Don't try to Terraform your entire infrastructure on day one.

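```hcl
# main.tf: a minimal ECS service definition.
# Assumes the cluster, task definition and target group are declared elsewhere in your config.
resource "aws_ecs_service" "app" {
  name            = "my-app"
  cluster         = aws_ecs_cluster.main.id
  task_definition = aws_ecs_task_definition.app.arn
  desired_count   = 2

  load_balancer {
    target_group_arn = aws_lb_target_group.app.arn
    container_name   = "app"   # must match a container name in the task definition
    container_port   = 3000    # port the container listens on
  }
}
```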

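Store your Terraform state remotely, for example in S3 with state locking enabled (a DynamoDB table, or S3-native locking via the use_lockfile backend option on Terraform 1.10 and later). Never store it locally.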
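Locking matters because two engineers running terraform apply at the same time would both read the same state and then overwrite each other's changes. The in-memory class below is a hypothetical stand-in for a backend lock. Acquiring it succeeds only when nobody else holds it, much like the conditional write a DynamoDB-based lock relies on.

```python
import threading
from typing import Optional


class StateLock:
    """Hypothetical in-memory stand-in for a remote state lock."""

    def __init__(self) -> None:
        self._holder: Optional[str] = None
        self._mutex = threading.Lock()  # makes check-and-set atomic, like a conditional write

    def acquire(self, who: str) -> bool:
        """Take the lock only if it is free; never wait or steal it."""
        with self._mutex:
            if self._holder is None:
                self._holder = who
                return True
            return False

    def release(self, who: str) -> None:
        """Release the lock, but only for the engineer who holds it."""
        with self._mutex:
            if self._holder == who:
                self._holder = None


lock = StateLock()
print(lock.acquire("alice"))  # True: alice can run apply
print(lock.acquire("bob"))    # False: bob must wait until alice releases
```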

Step 5: Observability (Weeks 4–6)

You cannot fix what you cannot see. Observability is the ability to understand the internal state of your system from its external outputs: logs, metrics, and traces.

The three pillars

- Metrics — numerical measurements over time (CPU, error rate, latency). Tool: Prometheus + Grafana, or Datadog.

- Logs — structured event records. Tool: CloudWatch Logs, Loki, or Papertrail.

- Traces — the path of a single request through your system. Tool: Jaeger, Tempo, or AWS X-Ray.

Most teams start with metrics and logs. Traces come later when you have multiple services and need to understand inter-service latency.
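Structured logs make the logs pillar searchable because each line is a JSON object with named fields instead of free text. Below is a minimal sketch using Python's standard logging module. Passing extra fields through a fields attribute is a convention assumed here, not something logging requires.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Merge structured fields passed as extra={"fields": {...}} (assumed convention).
        payload.update(getattr(record, "fields", {}))
        return json.dumps(payload)


def make_logger(name: str) -> logging.Logger:
    """Logger that writes JSON lines to stderr."""
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger


log = make_logger("checkout")
log.info("order placed", extra={"fields": {"order_id": "A123", "latency_ms": 84}})
# stderr: {"level": "INFO", "logger": "checkout", "message": "order placed", "order_id": "A123", "latency_ms": 84}
```

CloudWatch Logs and Loki can both query fields inside JSON log lines, which is why a fixed set of field names pays off later.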

Minimum viable observability

Set up these four signals before anything else:

- Error rate — what % of requests return 5xx?

- Latency (p99) — what does the slowest 1% of requests look like?

- Deployment tracking — mark the chart every time you deploy so you can correlate changes with regressions

- Alert on anomalies — page someone when error rate spikes above baseline
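The first two signals and the alert rule come down to a few lines of arithmetic. This sketch assumes you already have raw status codes and latencies for a time window. The p99 function uses the nearest-rank method. The paging rule (three times baseline, with a 1% floor so a near-zero baseline does not page on a single error) is an illustrative choice, not a standard.

```python
def error_rate(status_codes: list[int]) -> float:
    """Fraction of requests in the window that returned a 5xx status."""
    if not status_codes:
        return 0.0
    return sum(500 <= s <= 599 for s in status_codes) / len(status_codes)


def p99(latencies_ms: list[float]) -> float:
    """99th percentile latency using the nearest-rank method."""
    if not latencies_ms:
        raise ValueError("no latency samples in this window")
    ordered = sorted(latencies_ms)
    rank = (len(ordered) * 99 + 99) // 100  # ceil(0.99 * n) in integer arithmetic
    return ordered[rank - 1]


def should_page(current_rate: float, baseline_rate: float,
                factor: float = 3.0, floor: float = 0.01) -> bool:
    """Page when the error rate exceeds both a multiple of baseline and an absolute floor."""
    return current_rate > max(baseline_rate * factor, floor)


print(error_rate([200, 200, 503, 200]))  # 0.25
print(p99(list(range(1, 101))))          # 99
```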

Step 6: On-Call & Incident Response (Week 6+)

Automated deployments mean you ship faster, which also means a bad change reaches production faster. You need a process for handling incidents before you have them.

A minimal incident response process:

- Alert fires → designated on-call engineer gets paged (PagerDuty or OpsGenie)

- Incident declared → a Slack channel is opened, a lead is assigned

- Mitigation first → rollback or feature flag disable before root cause analysis

- Post-mortem → blameless, focused on system fixes, not people

This is more important than any tool choice. A team that responds to incidents systematically learns from them. A team that scrambles ad hoc repeats them.
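One way to make "mitigation first" hard to skip is to model each incident as a small state machine in whatever tooling you use. The stage names below are hypothetical and mirror the four steps of the process listed above. A post-mortem cannot be recorded before the incident is mitigated.

```python
from enum import Enum


class Stage(Enum):
    ALERTED = 1
    DECLARED = 2
    MITIGATED = 3
    POSTMORTEM_DONE = 4


# Each stage may only advance to the next one, so no post-mortem before mitigation.
NEXT = {
    Stage.ALERTED: Stage.DECLARED,
    Stage.DECLARED: Stage.MITIGATED,
    Stage.MITIGATED: Stage.POSTMORTEM_DONE,
}


class Incident:
    def __init__(self, title: str) -> None:
        self.title = title
        self.stage = Stage.ALERTED
        self.history = [Stage.ALERTED]

    def advance(self, to: Stage) -> None:
        """Move to the next stage, rejecting any skipped or backward step."""
        if NEXT.get(self.stage) is not to:
            raise ValueError(f"cannot move from {self.stage.name} to {to.name}")
        self.stage = to
        self.history.append(to)


incident = Incident("checkout 5xx spike")
incident.advance(Stage.DECLARED)
incident.advance(Stage.MITIGATED)  # e.g. rollback or feature flag disabled
incident.advance(Stage.POSTMORTEM_DONE)
```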

The 6-Week DevOps Implementation Timeline

| Week | Milestone |
|---|---|
| 1 | SDLC audit complete. Implementation path chosen. |
| 2 | CI pipeline running on main service. All PRs trigger tests. |
| 3 | Automated deployment to staging. Terraform for core resources. |
| 4 | Automated production deployment. IaC covers all critical infra. |
| 5 | Metrics dashboard live. Error rate and latency tracked. |
| 6 | Alert rules set. On-call rotation defined. First post-mortem process documented. |

Most teams see their deployment frequency double by the end of week 4. Deploy time drops from hours to minutes. Rollback time drops from "full redeployment" to "revert a commit."
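To check the deployment-frequency claim against your own numbers, count production deploys per calendar week before and after the pipeline goes live. This minimal sketch buckets hypothetical deploy dates by ISO week. The weeks in the timeline will not line up with ISO weeks, so choose explicitly which two weeks you compare.

```python
from collections import Counter
from datetime import date


def deploys_per_week(deploy_dates: list[date]) -> dict[tuple[int, int], int]:
    """Count production deployments per ISO (year, week)."""
    return dict(Counter(tuple(d.isocalendar()[:2]) for d in deploy_dates))


def frequency_ratio(counts: dict[tuple[int, int], int],
                    baseline_week: tuple[int, int], later_week: tuple[int, int]) -> float:
    """How many times more often you deployed in later_week than in baseline_week."""
    return counts.get(later_week, 0) / counts[baseline_week]


# Hypothetical deploy log: two deploys in ISO week 2, four in ISO week 5 of 2026.
deploys = [date(2026, 1, 5), date(2026, 1, 8),
           date(2026, 1, 26), date(2026, 1, 27), date(2026, 1, 28), date(2026, 1, 30)]
counts = deploys_per_week(deploys)
print(frequency_ratio(counts, (2026, 2), (2026, 5)))  # 2.0
```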

Common Mistakes to Avoid

Mistake 1: Building the "perfect" pipeline before using it. Ship a pipeline that works, then improve it based on real pain points.

Mistake 2: Making tests too slow. If tests take 30+ minutes, engineers disable them. Target under 10 minutes for PR-blocking tests.

Mistake 3: Adding Kubernetes too early. Kubernetes is the right tool for orchestrating many services at scale. For a monolith or two-service app, ECS or App Service is simpler and equally production-grade.

Mistake 4: No ownership model. DevOps breaks down when nobody owns the pipeline. Designate a "DevOps owner" for each team — it can be a rotating responsibility, but it must be someone's job.

Mistake 5: Treating IaC as optional. The longer you wait to codify infrastructure, the more console drift accumulates and the harder it becomes. Start Terraforming one resource on day one.
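A rotating owner, as in Mistake 4, only works if everyone can tell whose week it is. Below is a minimal round-robin sketch. It assumes a fixed team list and weekly hand-offs counted from an agreed start date. The names and dates are hypothetical.

```python
from datetime import date


def pipeline_owner(team: list[str], on: date, start: date) -> str:
    """Round-robin owner: the role passes to the next person every 7 days from start."""
    if not team:
        raise ValueError("team must not be empty")
    weeks_elapsed = (on - start).days // 7
    return team[weeks_elapsed % len(team)]


team = ["asha", "ben", "chen"]  # hypothetical team members
print(pipeline_owner(team, on=date(2026, 1, 12), start=date(2026, 1, 5)))  # ben
```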

Getting Help with DevOps Implementation

If your team is starting from scratch or a broken CI/CD state, an external DevOps implementation engagement can compress months of learning into weeks. At Ortem Technologies, we run 30-day DevOps implementation sprints that start with an audit and end with a working pipeline, IaC, and observability stack — with full documentation and team handover.

Also see: DevOps Implementation Checklist 2026 | Best DevOps Tools 2026 | Cloud DevOps Best Practices

About Ortem Technologies

Ortem Technologies is a premier custom software, mobile app, and AI development company. We serve enterprise and startup clients across the USA, UK, Australia, Canada, and the Middle East. Our cross-industry expertise spans fintech, healthcare, and logistics, enabling us to deliver scalable, secure, and innovative digital solutions worldwide.


About the Author

Director – AI Product Strategy, Development, Sales & Business Development, Ortem Technologies

Praveen Jha is the Director of AI Product Strategy, Development, Sales & Business Development at Ortem Technologies. With deep expertise in technology consulting and enterprise sales, he helps businesses identify the right digital transformation strategies - from mobile and AI solutions to cloud-native platforms. He writes about technology adoption, business growth, and building software partnerships that deliver real ROI.

Frequently Asked Questions

- How long does DevOps implementation take? A basic DevOps implementation (CI/CD, IaC, and observability) takes 4–8 weeks for a focused team. A full enterprise DevOps transformation across multiple product teams typically runs 3–6 months. Both can be broken into weekly milestones.

- What is the first step in implementing DevOps? Audit your current deployment process. Map every manual step between a developer committing code and that code reaching production. The longest and most error-prone steps are your first automation targets.

- Do you need Kubernetes to implement DevOps? No. Kubernetes is powerful but adds operational complexity. Start with a CI/CD pipeline and simple container deployments (ECS, App Service, Cloud Run). Add Kubernetes when you have multiple services that genuinely need orchestration.

- Which DevOps tools should you start with? CI/CD: GitHub Actions or GitLab CI. IaC: Terraform. Containers: Docker + ECS or Kubernetes (EKS/AKS/GKE). Observability: Prometheus + Grafana. Incident management: PagerDuty or OpsGenie. Start with one tool per category and add more as the team grows.

- Can small teams implement DevOps? Yes, and small teams often benefit most. A 3-person engineering team with a solid CI/CD pipeline ships more reliably than a 20-person team deploying manually. DevOps is about removing friction, not adding headcount.

Stay Ahead

Get engineering insights in your inbox

Practical guides on software development, AI, and cloud. No fluff — published when it's worth your time.

Ready to Start Your Project?

Let Ortem Technologies help you build innovative solutions for your business.

You Might Also Like

DevOps Implementation Checklist 2026: 47 Steps to Production-Ready Pipelines

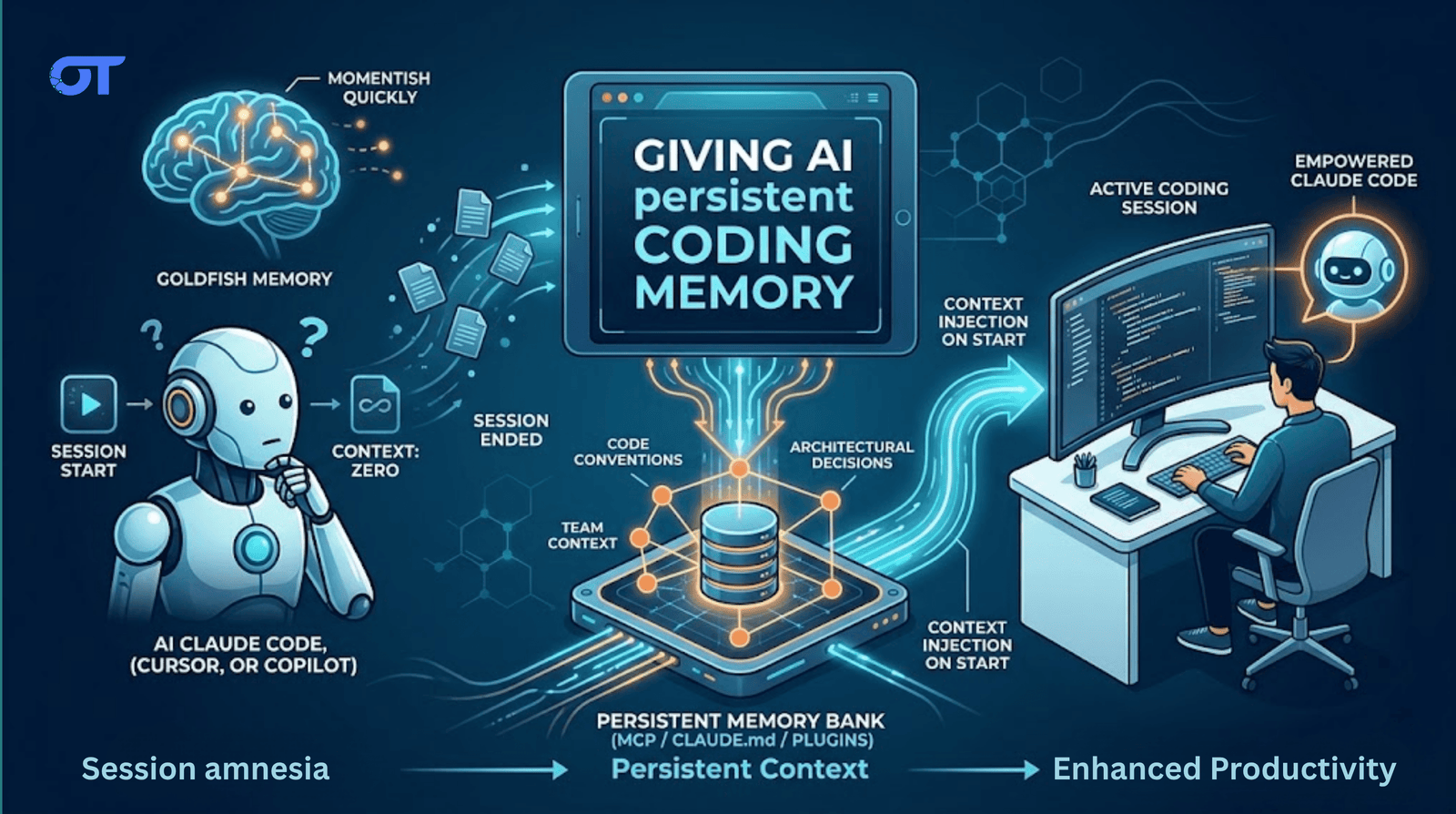

How to Handle Memory in Your AI Coding Setup